The Mozilla Audio Data API: what do the numbers mean?

In the last few months there has been considerable progress towards enabling programmatic access to audio data in web browsers.

Mozilla has implemented the Audio Data API, which provides read and write access to audio with JavaScript. To use it you'll need Firefox 4 (or later).

The Mozilla API exposes the audio data from an HTML audio or video element: numbers that describe an audio signal.

It's simple to use, but what do the numbers actually mean?


Getting started

Just to reiterate: you'll need Firefox 4 (or later) for the examples below.

To read audio with the Mozilla Audio Data API, add a MozAudioAvailable event listener to an audio or video element:

```javascript
mediaElement.addEventListener('MozAudioAvailable', handleAudioData, false);
```

...with a callback like this:

```javascript
function handleAudioData(event) {
  var frameBuffer = event.frameBuffer; // decoded samples for all channels
  var time = event.time;               // time in seconds of the first sample
  // do something...
}
```

The MozAudioAvailable event has two attributes:

- frameBuffer: an array of numbers representing decoded audio sample data

- time: the time, in seconds, of the first sample in the framebuffer, relative to the start of the audio stream.

The audio API also makes data available for the audio or video element (in other words, for the audio or video as a whole) once the loadedmetadata event has been fired:

```javascript
mediaElement.addEventListener("loadedmetadata", handleMetadata, false);

function handleMetadata(event) {
  var numChannels = mediaElement.mozChannels;               // e.g. 2 for stereo
  var sampleRate = mediaElement.mozSampleRate;              // e.g. 44100
  var frameBufferLength = mediaElement.mozFrameBufferLength; // samples per framebuffer
}
```

The audio API adds three new attributes to the media element itself, which can be read once the loadedmetadata event has fired:

- mozChannels: the number of audio channels, which may be 1 (mono), 2 (stereo), 6 (5.1 'surround sound') or some other value.

- mozSampleRate: the number of samples encoded per second, per channel, in the audio.

- mozFrameBufferLength: the total number of samples, across all channels, in each framebuffer.

What does sound look like?

A sound can be represented as a graph:

- time on the horizontal (x) axis

- amplitude on the vertical (y) axis: the larger the amplitude, the louder the sound.

Pitch corresponds to frequency, which shows up as how 'squashed' the graph is horizontally: the faster the amplitude rises and falls over time, the higher the frequency.

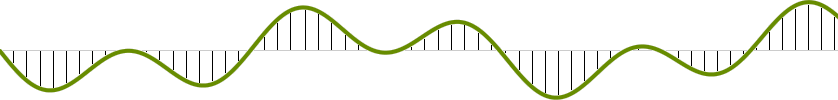

The sampling rate for a digital sound recording is the number of times the amplitude represented by this graph is measured each second.

For CD audio, this value is 44,100, which means that 44,100 data values are encoded each second for each channel; this can be written as 44.1kHz. (Hz is the abbreviation for hertz: the number of times, or cycles, per second.) 44.1kHz recording enables good sound reproduction: the human ear can discern frequencies between around 20Hz and 20kHz, and a sampling rate of 44.1kHz can represent frequencies up to half that rate, 22.05kHz. (The sampling rate for telephones is 8kHz.)
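As a quick check of that arithmetic, this small sketch works out how long each sample lasts and the highest frequency a given sampling rate can represent (half the sampling rate, known as the Nyquist frequency). The function name `describeSampleRate` is hypothetical and not part of the Mozilla API.

```javascript
// Hypothetical helper: derive basic facts from a sampling rate in Hz.
function describeSampleRate(sampleRate) {
  return {
    samplePeriod: 1 / sampleRate,  // seconds between successive samples
    maxFrequency: sampleRate / 2   // highest representable frequency (Nyquist)
  };
}

var cd = describeSampleRate(44100);   // maxFrequency: 22050
var phone = describeSampleRate(8000); // maxFrequency: 4000
```

The telephone figure shows why phone calls sound muffled: nothing above 4kHz survives an 8kHz sampling rate.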

Each framebuffer read using the Mozilla Audio Data API is an array representing a sound as it changes during a (short!) period of time. (This can be confusing if you're used to working with video frames.)

Each value in each framebuffer array represents an audio sample: the y coordinate of the 'sound graph' measured at a point in time. Values are normalised to the range -1 to 1, however many bits of digital information (the bit depth) are recorded for each audio sample. If sample values were represented as integers instead, developers would need to know the maximum and minimum possible values, which depend on the bit depth of the source.
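One common way to produce such values, assumed here for illustration rather than taken from Firefox's decoder, is to divide a signed integer sample by 2 to the power (bitDepth - 1). `normaliseSample` is a hypothetical helper; it shows how the same loudness level maps to the same normalised value at different bit depths.

```javascript
// Hypothetical helper: map a signed integer sample of the given bit depth
// onto the -1..1 range used by the framebuffer.
function normaliseSample(value, bitDepth) {
  var scale = Math.pow(2, bitDepth - 1); // e.g. 32768 for 16-bit audio
  return value / scale;
}

normaliseSample(-32768, 16); // -1 (the most negative 16-bit value)
normaliseSample(16384, 16);  // 0.5
normaliseSample(64, 8);      // 0.5: the same level at a lower bit depth
```

With this convention the largest positive 16-bit value, 32767, maps to just under 1.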

By default, each framebuffer array consists of 1024 sample values for each channel. For stereo audio this means that by default each framebuffer array has a total of 2048 values: the number of values per channel (1024) multiplied by the number of channels (2).

The length of each framebuffer array can be adjusted by setting the mozFrameBufferLength value for the media element. This can be done in a loadedmetadata event handler; any value between 512 and 16,384 can be used. A larger mozFrameBufferLength value means that each framebuffer array has a larger number of sample values, so each framebuffer represents a longer time period, and vice versa.
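A minimal sketch of requesting a different framebuffer length, assuming `mediaElement` is an audio or video element in Firefox 4 or later. `clampFrameBufferLength` and `requestFrameBufferLength` are hypothetical helper names; the clamp simply keeps the requested value inside the 512 to 16,384 range mentioned above.

```javascript
// Hypothetical helper: keep a requested length inside the 512..16,384 range.
function clampFrameBufferLength(requested) {
  return Math.min(16384, Math.max(512, Math.round(requested)));
}

// Set mozFrameBufferLength once metadata is available.
function requestFrameBufferLength(mediaElement, requested) {
  mediaElement.addEventListener("loadedmetadata", function () {
    mediaElement.mozFrameBufferLength = clampFrameBufferLength(requested);
  }, false);
}

// Usage: ask for 8,192 values per framebuffer (4,096 per channel in stereo).
// requestFrameBufferLength(document.querySelector("audio"), 8192);
```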

In other words:

- a larger mozFrameBufferLength means each framebuffer array has more values, represents a longer time period, and therefore the MozAudioAvailable event is fired less frequently with more data

- a smaller mozFrameBufferLength means each framebuffer represents a shorter time period, so the MozAudioAvailable event is fired more frequently with less data

For example:

- for stereo audio with the default framebuffer array length of 2048, there are 1024 sample values for each channel in each framebuffer array

- with a sampling rate of 44,100 samples per second, each sample value for a given channel represents a time period of 1/44,100 seconds

- therefore, since each sample represents 1/44,100th of a second, each framebuffer in this case represents 1024 x 1/44,100 seconds, i.e. around 0.023 seconds, so with default values the MozAudioAvailable event is fired around 43 times per second (44,100/1024 ≈ 43.07).

If mozFrameBufferLength is set to 16,384 (the maximum), each framebuffer for a stereo recording will have 16,384/2 = 8,192 values per channel. Each framebuffer therefore holds values for a total of 8,192 x 1/44,100 seconds, i.e. around 0.186 seconds. In this case, MozAudioAvailable will be fired around five times per second (44,100/8,192 ≈ 5.4).
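The arithmetic in the last few paragraphs can be wrapped up in a small function. `framebufferTiming` is a hypothetical helper; it assumes, as described above, that the framebuffer length is the total across all channels.

```javascript
// Hypothetical helper: work out what one framebuffer represents.
function framebufferTiming(frameBufferLength, channels, sampleRate) {
  var samplesPerChannel = frameBufferLength / channels;
  var duration = samplesPerChannel / sampleRate; // seconds per framebuffer
  return {
    samplesPerChannel: samplesPerChannel,
    duration: duration,
    eventsPerSecond: 1 / duration                // MozAudioAvailable rate
  };
}

framebufferTiming(2048, 2, 44100);  // 1024 per channel, ~0.0232 s, ~43.1 events/s
framebufferTiming(16384, 2, 44100); // 8192 per channel, ~0.186 s, ~5.4 events/s
```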


Note that the event.time value of the MozAudioAvailable event gives the time point for the first sample value in the framebuffer array, relative to the start of the audio or video.

The framebuffer array is of type Float32Array. Typed arrays are a new addition to JavaScript; a Float32Array is an array of 32-bit floating point numbers. Each framebuffer array has the form [channel1, channel2, ..., channelN, channel1, channel2, ..., channelN, ...], i.e. the data for each channel is interleaved. For example, a framebuffer for stereo audio will consist of the first sample for the left channel, followed by the first sample for the right channel, the second sample for the left channel, the second sample for the right channel, and so on, up to (in the default case) the 1024th sample for the left channel and finally the 1024th sample for the right channel.
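Because the channels are interleaved, code that wants to treat each channel separately has to split the array first. This is a minimal sketch; `deinterleave` and `sampleTime` are hypothetical helpers. `sampleTime` combines the event.time value described above with the sampling rate to give the time of any sample within one channel.

```javascript
// Split an interleaved framebuffer [L0, R0, L1, R1, ...] into one
// Float32Array per channel.
function deinterleave(frameBuffer, channels) {
  var perChannel = frameBuffer.length / channels;
  var result = [];
  for (var c = 0; c < channels; c++) {
    result.push(new Float32Array(perChannel));
  }
  for (var i = 0; i < frameBuffer.length; i++) {
    // Value i belongs to channel (i % channels), at position floor(i / channels).
    result[i % channels][Math.floor(i / channels)] = frameBuffer[i];
  }
  return result;
}

// Time in seconds (from the start of the media) of the sample at `index`
// within one channel, given event.time for the first sample.
function sampleTime(eventTime, index, sampleRate) {
  return eventTime + index / sampleRate;
}

// In a MozAudioAvailable handler, assuming numChannels and sampleRate were
// read earlier from mozChannels and mozSampleRate:
// var channelData = deinterleave(event.frameBuffer, numChannels);
// var left = channelData[0], right = channelData[1];
```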

What's the frequency, Kenneth?

Given framebuffer data from the MozAudioAvailable event, how can the amplitude of a particular frequency be measured at a point in time?

In fact, the 'sound graph' shown above is a simplification. Each point on the graph actually represents the sum of an (infinite) number of component frequencies.

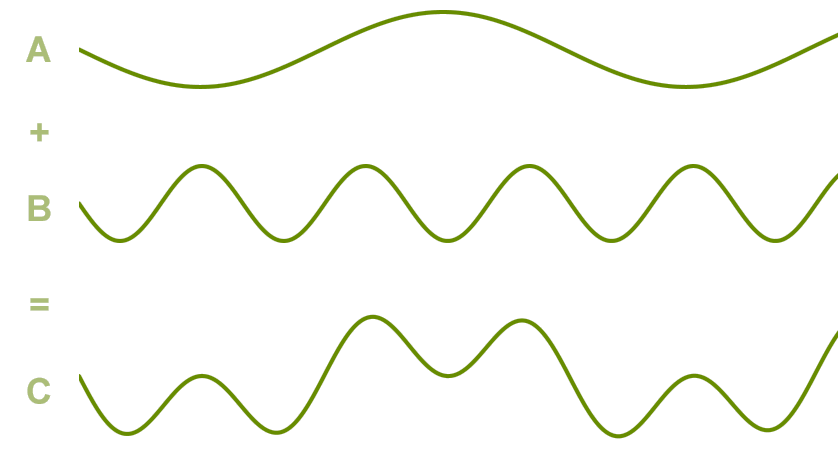

Fourier theory shows that signals like those for sounds, however complex, can be represented as the sum of an (infinite) number of sine waves.
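The idea can be sketched in code by sampling two sine waves and adding them point by point; the result is a more complex waveform, just like a real sound graph. `sineWave` and `addSignals` are hypothetical helper names, and the frequencies and amplitudes are arbitrary choices for illustration.

```javascript
// Generate `length` samples of a sine wave with the given frequency (Hz)
// and amplitude, sampled at sampleRate.
function sineWave(frequency, amplitude, sampleRate, length) {
  var samples = new Float32Array(length);
  for (var i = 0; i < length; i++) {
    samples[i] = amplitude * Math.sin(2 * Math.PI * frequency * i / sampleRate);
  }
  return samples;
}

// Add two signals sample by sample to form a more complex waveform.
function addSignals(a, b) {
  var sum = new Float32Array(a.length);
  for (var i = 0; i < a.length; i++) {
    sum[i] = a[i] + b[i];
  }
  return sum;
}

var rate = 44100;
var low = sineWave(440, 0.5, rate, 1024);   // A4
var high = sineWave(880, 0.25, rate, 1024); // one octave higher, quieter
var combined = addSignals(low, high);
```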


Fourier analysis is a set of techniques for calculating component sine waves.

For sounds, a sine wave represents a single frequency, with time on the x-axis and amplitude (wave height), corresponding to loudness, on the y-axis.

This means that Fourier analysis enables 'decomposition' of a sound (like that represented by the sound graph shown above) into component sine waves, i.e. component frequencies. The set of component frequencies produced by Fourier analysis is an approximation (unless infinite in size, which is difficult in JavaScript) but good enough for many purposes. In other words, Fourier analysis can take amplitude values for a period of time, such as those in a framebuffer array, and return the frequency composition for that period of time. (Remember that each number in each framebuffer array represents the sum of the amplitudes of all frequencies at a point in time for a particular channel.)
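To make this concrete, here is a naive discrete Fourier transform: the textbook definition, not the Fast Fourier Transform. For each frequency bin it measures how strongly the samples correlate with a cosine and a sine at that frequency. It is a sketch for illustration only, since its running time grows with the square of the number of samples; `dftMagnitudes` and `binFrequency` are hypothetical names. For N samples, bin k corresponds to a frequency of k x sampleRate / N hertz.

```javascript
// Naive DFT: return the magnitude of each frequency bin 0..N/2 of a real signal.
function dftMagnitudes(samples) {
  var n = samples.length;
  var bins = Math.floor(n / 2) + 1; // for real input, higher bins mirror these
  var magnitudes = new Float64Array(bins);
  for (var k = 0; k < bins; k++) {
    var re = 0, im = 0;
    for (var t = 0; t < n; t++) {
      var angle = 2 * Math.PI * k * t / n;
      re += samples[t] * Math.cos(angle);
      im -= samples[t] * Math.sin(angle);
    }
    magnitudes[k] = Math.sqrt(re * re + im * im);
  }
  return magnitudes;
}

// Convert a bin index into a frequency in Hz.
function binFrequency(k, sampleRate, n) {
  return k * sampleRate / n;
}

// With 1024 samples per channel at 44.1kHz, bin 4 is 4 * 44100 / 1024 = 172.265625 Hz.
```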

The following example visualises this, using a JavaScript implementation of the Fast Fourier Transform algorithm to chart the amplitude of component audio frequencies of a sound through time:

Fast Fourier Transform example

(The code in this example is adapted from the Mozilla API code samples.)
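For reference, the following is not the code from that example but an independent sketch of a standard iterative radix-2 Cooley-Tukey FFT, which computes the same result as a discrete Fourier transform in time proportional to N log N. It assumes the number of samples is a power of two (true of the default 1024 samples per channel). `fft` and `peakFrequency` are hypothetical names; `peakFrequency` could be applied to one channel of a framebuffer to find its strongest component.

```javascript
// In-place iterative radix-2 FFT on separate real and imaginary arrays.
// re.length must be a power of two.
function fft(re, im) {
  var n = re.length;
  // Reorder the input into bit-reversed index order.
  for (var i = 1, j = 0; i < n; i++) {
    var bit = n >> 1;
    for (; j & bit; bit >>= 1) j ^= bit;
    j ^= bit;
    if (i < j) {
      var tmp = re[i]; re[i] = re[j]; re[j] = tmp;
      tmp = im[i]; im[i] = im[j]; im[j] = tmp;
    }
  }
  // Combine pairs of half-size transforms into transforms of size len.
  for (var len = 2; len <= n; len <<= 1) {
    var angle = -2 * Math.PI / len;
    var wRe = Math.cos(angle), wIm = Math.sin(angle);
    for (var start = 0; start < n; start += len) {
      var curRe = 1, curIm = 0; // running twiddle factor
      for (var k = 0; k < len / 2; k++) {
        var a = start + k, b = start + k + len / 2;
        var tRe = re[b] * curRe - im[b] * curIm;
        var tIm = re[b] * curIm + im[b] * curRe;
        re[b] = re[a] - tRe; im[b] = im[a] - tIm;
        re[a] += tRe; im[a] += tIm;
        var nextRe = curRe * wRe - curIm * wIm;
        curIm = curRe * wIm + curIm * wRe;
        curRe = nextRe;
      }
    }
  }
}

// Return the frequency (Hz) of the strongest non-DC bin in one channel.
function peakFrequency(samples, sampleRate) {
  var n = samples.length;
  var re = new Float64Array(samples); // copy so the input is untouched
  var im = new Float64Array(n);
  fft(re, im);
  var best = 1;
  for (var k = 2; k <= n / 2; k++) {
    if (re[k] * re[k] + im[k] * im[k] > re[best] * re[best] + im[best] * im[best]) {
      best = k;
    }
  }
  return best * sampleRate / n;
}
```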

Is that all there is?

The Mozilla audio API can also be used to create audio data. This is particularly powerful given that the output of one audio element can be used as the input of another.

Simple examples of creating an audio stream are available from the Mozilla Developer Centre Audio API page.
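The write side of the Mozilla API works by calling `mozSetup(channels, sampleRate)` on an audio element and then passing a Float32Array of samples to `mozWriteAudio()`, which returns the number of samples written. That count may be smaller than the array passed in, so a real player would keep the remainder and try again later; this sketch ignores that. `makeTone` and `playTone` are hypothetical helpers, and the code only produces sound in a browser that implements the Mozilla Audio Data API.

```javascript
// Fill a Float32Array with a sine tone (values in -1..1).
function makeTone(frequency, seconds, sampleRate) {
  var samples = new Float32Array(Math.round(seconds * sampleRate));
  for (var i = 0; i < samples.length; i++) {
    samples[i] = Math.sin(2 * Math.PI * frequency * i / sampleRate);
  }
  return samples;
}

// Write a mono tone to an audio element with mozSetup/mozWriteAudio.
function playTone(audioElement, frequency, seconds) {
  var sampleRate = 44100;
  audioElement.mozSetup(1, sampleRate); // 1 channel at 44.1kHz
  var samples = makeTone(frequency, seconds, sampleRate);
  return audioElement.mozWriteAudio(samples); // number of samples written
}

// Usage in Firefox 4 or later: playTone(new Audio(), 440, 0.5);
```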

More information and a number of more complex examples are available from the Mozilla Audio Data API list of demos.

The W3C Audio Incubator Group, of which the BBC is an Initiating Member, aims:

to explore the possibility of starting one or more specifications dealing with various aspects of advanced audio functionality, including reading and writing raw audio data, and synthesizing sound or speech

The W3C Web Audio API aims to enable common, computationally expensive digital audio tasks (such as Fourier transforms) to be done natively, via a JavaScript API, rather than having to be implemented and run purely in JavaScript as in the example above. The proposed specification:

describes a high-level JavaScript API for processing and synthesizing audio in web applications. The primary paradigm is of an audio routing graph, where a number of AudioNode objects are connected together to define the overall audio rendering. The actual processing will primarily take place in the underlying implementation (typically optimized Assembly/C/C++ code), but direct JavaScript processing and synthesis is also supported

A Safari build implementing the Web Audio API is available from the Web Audio examples page. The Noteflight HTML5 score viewer and player prototype uses SVG with the Web Audio API to display, and play, a musical score.

Special thanks to Chris Lowis, Mark Glanville, Chris Needham and Chris Bowley.